A tale from elementary probability theory

Feynman diagrams are associated with complicated physics, but they can also be found hiding in relatively simple questions about Gaussian random variables.

For example, suppose I asked you to calculate the expectation value

where each is a (not necessarily independent) normal random variable with zero mean. Say I want the answer in terms of the covariances between the variables.

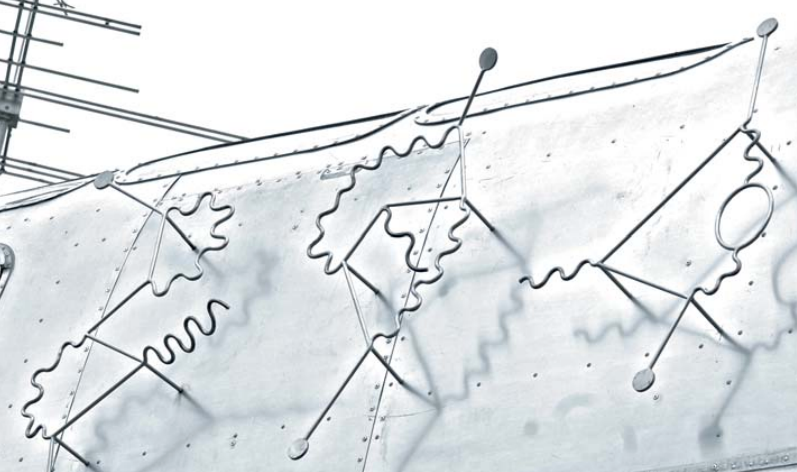

If you are especially visually-minded (and considerably brilliant) you may find yourself eventually drawing the following diagrams…

…on your way to producing the answer, which is in this case is

Here’s some Julia code approximately showing that this formula is correct.

julia> using Distributions, Statistics

julia> Σ = let A = rand(4,4); A'A end # make a symmetric matrix

4×4 Matrix{Float64}:

1.41971 0.705302 0.965598 1.47976

0.705302 0.391487 0.566326 0.72258

0.965598 0.566326 0.893622 1.02381

1.47976 0.72258 1.02381 1.58154

julia> X = MultivariateNormal(Σ);

julia> sum(9Σ[i,i]Σ[i,j]Σ[j,j] + 6Σ[i,j]^3 for i=1:4, j=1:4)

313.9236215857006

julia> mean(sum(x.^3)^2 for x in eachcol(rand(X, 10_000_000)))

313.4874899506917Isserlis’ theorm

There is one key result from which all of the ensuing combinatorics sprouts: Isserlis’ theorm, which says that if you have a bunch of Gaussian random variables where , then the mean of their product is

A perfect matching of a set is a disjoint partition of the set into pairs. For example, here are the perfect matchings of four distinguishable objects:

Isserlis’ theorem says we can expand into three terms, each a product of covariances , according to the the three perfect matchings above:

Note that whenever is odd—you can’t pair up an odd number of objects!

Doing it the long way

For the sake of concreteness, here’s the working for the original problem. We start by expanding the square of a sum into a sum of squares:

We can apply Isserlis’ theorem to each of these terms. Even though contains repeated variables, the theorem asks us to enumerate all perfect matchings of the six objects . For example, here is one perfect matching:

In general, there are matchings of objects if is even, so there are matchings for six objects. Well, here they are:

All these matchings correspond to all the terms in the sum:

This simplifies into just two distinct terms, after which we sum over the indices and to find the total value of .

Of course, you probably wouldn’t enumerate the terms one by one like this. You would try to skip straight to the last line. But how can you know the different possible terms and the coefficients they should get?

Doing it with Feynman diagrams

The trick is to separate the objects into two groups drawn at the same point, so that instead of finding all perfect matchings of six objects, we must find all ways to pair up the legs of two degree three vertices:

When regarded this way, distinct perfect matchings that result in the same term have the same diagram (if the legs of a vertex aren’t distinguishable). This makes it much easier to find the possible distinct terms—and more importantly, involves cooler diagrams. For instance, it is easy to tell that there are only two distinct terms, since you can’t join the vertices in any other way.

It is much more difficult, however, to determine the correct coefficients of each term. The coefficient should tell us how many diagrams there are of that topology if the legs of each vertex are distinguishable.

If we’re careful, we can count the number of choices we made when drawing each diagram. The following figure tries to illustrate what I can’t explain well in words: at each step, choose a vertex leg “” and count your options: how many other vertex legs can you connect to so that you end up with an edge you want? Repeat these steps until you complete the diagram. Sometimes you encounter two forking options: steps performed in series have their multiplicities multiplied, while alternative steps add.

For example, here are all the ways of drawing “”, starting from the highlighted leg “”. Two options result in a loop, and the other three result in a bar, so we branch into two topologically distinct possibilities, and so on…

Working down the figure we can see there are ways to make this diagram, treating vertex legs as distinguishable. Therefore, the coefficient of the corresponding term is .

The other diagram is slightly easier to compute the multiplicity for: It comes out as .

Now that we know the terms and their coefficients, we have determined

so we can obtain the final answer by summing over indices:

Finding diagram multiplicities

We can try to find a formula to more easily determine the multiplicities of each diagram. In our case, the formula

where is the number of loops and the number of parallel edges for each pair of vertices.

To see this, consider what happens if you permute the labels of the legs at any single vertex. The diagram’s topology doesn’t change, since the legs stay at their vertex, but the specific perfect matching of the legs might be different. Remember, we want to count all the perfect matchings that give the same diagram.

For example, the two perfect matchings below differ in that the legs and are swapped:

But these are distinct perfect matchings which both correspond to the “” diagram.

So, counting all leg permutations, does that mean there are different perfect matchings for this diagram? Not quite, because some leg permutations don’t change the perfect matching. Specifically, swapping legs in a loop does nothing:

Also, if there are parallel edges sharing the same vertices, like in the “” diagram, then permuting parallel edges among themselves does nothing either:

For parallel edges, there are ways to order them that give the same perfect matching.

Therefore, we originally overcounted by a factor of two for each loop present, and by a factor of for each set of parallel edges. After dividing out the denominator we are left with the correct diagram multiplicities

which is for and for .

A more complex example

Why do some Feynman diagrams have squiggly lines?

Suppose someone asks you to compute

where is a vector of zero-mean Gaussian random variables that correlate with each other as . Say is another such random variable with variance , but which is independent of all the others, so . Expanding the expression:

Each term in this sum separates into , and we can consider each factor independently.

But this isn’t as cool because it doesn’t lead to Feynman diagrams with multiple edge types.

So lets consider as one thing. Isserlis’s theorm tells us to express this as a sum of all perfect matchings over the ten random variables in this expression. This we shouldn’t do, but we can find the distinct terms that appear and their multiplicities by drawing diagrams. Simply treat each of the four groups as a vertex with three legs, one for each of the factors .

However, if we join a -leg to a -leg in our diagram, this corresponds to a perfect matching that involves a correlation term which vanishes. We can simply disregard all such perfect matchings. An easy way to enforce this is to colour the -type legs and -legs differently, and to say edges can only link legs of the same colour. Let’s draw -type edges as and -type edges as just to make it cool.

Here are all possible terms:

The final value of is simply the sum of these.

Here’s some more Julia to check that it works.

julia> using LinearAlgebra: tr

julia> Σ = let A = rand(4,4); A'A end;

julia> X = MultivariateNormal(Σ);

julia> mean((sum(A.^2)*randn())^4 for A in eachcol(rand(X, 100_000_000)))

195435.69579829712

julia> 3sum([

tr(Σ)^4

4tr(Σ^2)tr(Σ)^2

4tr(Σ^2)^2

8tr(Σ^2)^2

32tr(Σ^4)

16tr(Σ^4)

8tr(Σ^2)tr(Σ)^2

32tr(Σ^3)tr(Σ)

])

193862.80787076356We’ve used a trick to write things in terms of traces instead of indices. Note that we are summing over all indices, so terms like can be interpreted as matrix multiplication . For our example, this trick happens to work for everything so our final answer won’t have any summation signs or indices at all.

The multiplicities are a little hard to find, but it can be done using the tree-drawing method illustrated above. I have not found a nice combinatorial formula that explains the multiplicities of these diagrams like we had before. Maybe next time?